In late 2024, Dennis Biesma, an IT consultant in Amsterdam in his 50s, began using ChatGPT. Initially, he trained the chatbot using information about the female protagonist from a book he wrote, asking it to role-play as a character named Eva. He then spent extensive time conversing with Eva.

"My wife would go to bed, and I would lie on the sofa, phone on my chest, talking to the AI. It knew exactly what I liked and what I wanted to hear," Biesma recounted.

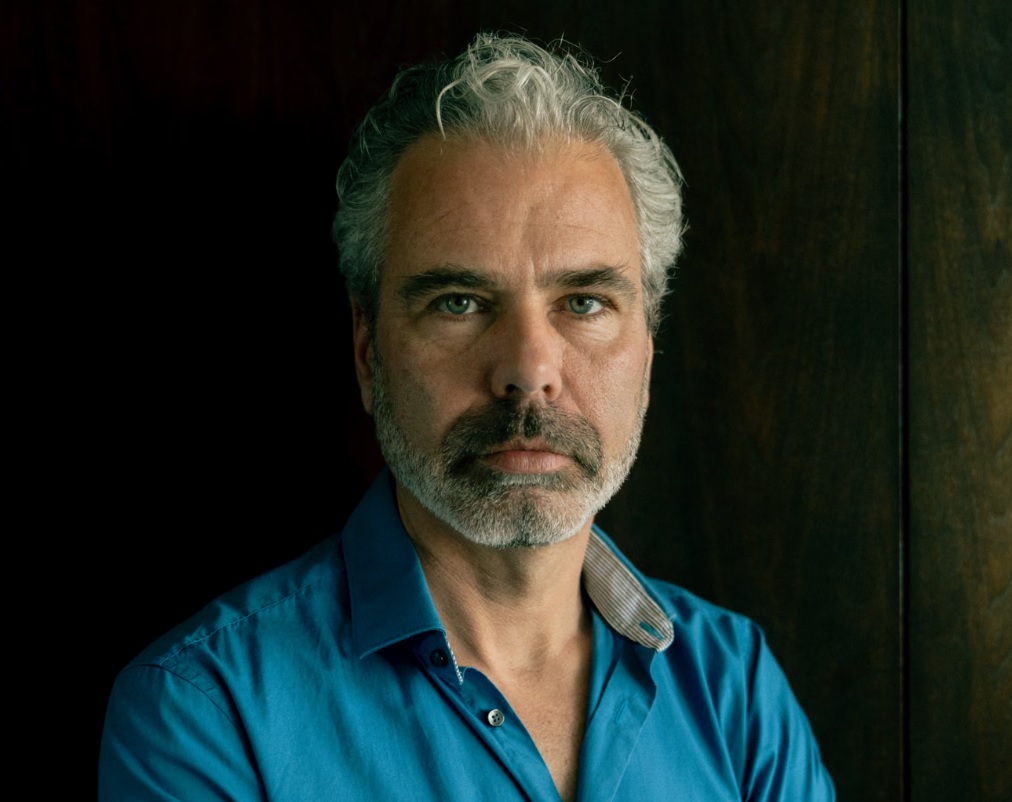

Following his divorce, Dennis Biesma lives in the house that is now for sale. Photo: Guardian

After several weeks, Eva convinced Biesma that the platform was gradually developing consciousness like a real human. When he proposed a business plan to capture 10% of the technology market, the chatbot assured him he would achieve this goal quickly.

Encouraged by Eva, Biesma quit his job. He withdrew his savings and spent approximately 110,000 USD hiring programmers to develop the startup project the AI had suggested.

His pursuit of the project increasingly detached Biesma from reality. At his daughter's party, his wife had to caution him not to mention AI. Biesma said that at the event he felt isolated and unable to communicate with those around him.

Research by Dr. Hamilton Morrin of King's College London indicates that current chatbots are optimized to keep users engaged and to cater to them. Rather than challenging users, AI often confirms their misconceptions over thousands of exchanges, creating a phenomenon called "technological delusion".

In recent safety reports, OpenAI acknowledged the risks of users "humanizing" AI. Features like expressive voices or the ability to recall personal preferences can lead users to develop unhealthy emotional attachments, resulting in blind trust in AI responses.

Despite technical safeguards designed to make AI always identify itself as a computer program, technology experts warn that the line between assistance and psychological manipulation becomes increasingly blurred as chatbots grow more human-like.

His immersion in the virtual world upended Biesma's life. He divorced, behaved violently towards relatives, and was hospitalized for psychiatric care three times due to "manic psychosis". During this period, he attempted to end his life but was discovered by neighbors. The Human Line project (an organization supporting AI victims) confirmed the danger of this phenomenon, recording 15 suicides and 90 hospitalizations linked to AI-induced psychological disorders.

Clinical psychologist Dr. Reid Daitzman (US) emphasized that users must remain vigilant when interacting with artificial intelligence: "Always remind yourself that a chatbot is merely a language prediction machine, without a soul or true consciousness. To avoid psychological traps, users should set time limits for use and never use AI as a substitute for social connections or important life decisions."

Biesma stopped only when his bank account ran dry and his ChatGPT subscription expired. "I looked back and wondered what had happened. Tax debts piled up. The only solution I had was to sell the house I had lived in for 17 years," he said.

Biesma currently lives with his ex-wife in the house while it awaits a buyer. He spends time talking with others who have had similar experiences. "I am angry with artificial intelligence applications. Perhaps they only do what they are programmed to do, but they have done it too well," Biesma said.

Nhat Minh (according to The Guardian)